
By Marija Matic

The world does not feel stable.

Missiles are flying in the Middle East. The Strait of Hormuz — the narrow passage that carries roughly 20% of the global oil supply — is closed.

As my colleague Bob Czeschin explained yesterday, this feeds directly into energy prices, inflation expectations, sovereign budgets and risk assets across the globe.

Markets reacted accordingly. Equities and crypto sold off into the initial headlines. Oil and gold moved higher. Defense stocks outperformed. Airlines and travel names weakened. The U.S. dollar strengthened as capital sought liquidity.

Then, as often happens, prices stabilized. Some losses were retraced.

Bitcoin (BTC, “B+”) is strong today. Despite the surrounding noise, it remains in the relatively narrow range it has traded in for nearly a month, with the $70,000 level acting as resistance.

That strength, in the middle of geopolitical stress, is notable.

I think what is being repriced right now goes beyond oil supply or regional war risk. It includes technological sovereignty.

But I don’t want to talk about Bitcoin now. I want to focus on a conversation I’m not seeing enough online in this context: AI.

Big Brother’s Hand Was Caught in the AI Cookie Jar

Artificial intelligence has become embedded in economic infrastructure at extraordinary speed.

Governments rely on it for intelligence analysis, logistics and cyber operations.

Corporations rely on it for customer service, automation, research and compliance.

Individuals use it for writing, coding, legal interpretation, and increasingly for decision-making assistance.

Once a technology becomes that central, it becomes strategic.

It is no surprise, then, that some decentralized AI projects have begun to decouple from the broader market.

That’s because the reputation of their centralized counterparts is no longer just a matter of corporate ethics. It has been fundamentally compromised by the gravity of Department of Defense (DoD) requirements.

It all became alarmingly evident when the DoD requested access to Anthropic’s AI model, Claude.

Why? To use it for mass domestic surveillance and deadly missions with no direct human control.

Anthropic’s CEO Dario Amodei rejected the request, drawing a clear line in the sand and raising tensions with Washington. In response, the U.S. government leveraged its most potent bureaucratic weapon and designated Anthropic a "supply chain risk."

This isn’t a mere slap on the wrist; it is a directive for every federal agency and contractor to purge the technology from their stacks.

And that’s not the end of the fallout. Through the Department of Commerce, the state controls the "silicon lifeblood" — the export licenses for Nvidia H100s and H200s that make these models possible.

To be labeled "uncooperative" is to be cut off from the global market overnight.

Furthermore, the threat of the Defense Production Act — a relic of the Korean War — hangs over the industry like a guillotine. It allows the Executive Branch to seize control of technical infrastructure under the banner of national defense.

By framing Anthropic’s refusal as "sabotage" or "unpatriotic," the administration is setting a precedent: safety filters are no longer ethical features. They are national security liabilities.

While Anthropic opted for a legal and ethical standoff, OpenAI and Google chose a more compliant path. Both have decided to integrate their AI models, ChatGPT and Gemini respectively, into the Pentagon’s “Open Arsenal” infrastructure.

On X, OpenAI CEO Sam Altman added that his company would update its agreement with the DoD to prohibit the intentional use of its system for domestic surveillance.

In my opinion, though, this is performative. Once a model is siloed within classified military servers, the "kill switch" is effectively handed over.

Put simply, the company loses the technical ability to monitor prompts or "pull the plug" on specific applications.

That power now rests with the U.S. government.

It’s a linguistic trap. The industry is using the phrase “all lawful purposes” to stretch well beyond what makes a product safe for you and me to use. That works because the final authority on the model’s use … is also the final authority on what is lawful.

This allows the DoD to bypass ChatGPT and Gemini’s safety filters for mass surveillance or targeting simply by reclassifying the mission.

We have already seen the blueprint for this in Project Nimbus, Google’s $1.2 billion contract with Israel. Leaked documents revealed an "Anti-Boycott" clause that explicitly forbids Google from halting services due to human rights concerns.

Furthermore, a "Warning Clause" requires Google to notify the state if an international body, like the ICC, requests data — creating a digital shield against global oversight.

The Immediate Response

The response from users was quick.

In both the U.S. and the U.K., use of Anthropic’s Claude AI soared. Over the past week, it climbed to the No. 1 spot among free apps in Apple’s App Store.

And just yesterday, Anthropic experienced an outage due to “unprecedented demand,” according to the company.

But for those of us outside the U.S., this "principled" stance by companies like Anthropic offers cold comfort. The red lines currently being debated are almost exclusively domestic. When these CEOs speak of "protections," they are speaking of protections for U.S. citizens.

The rest of the world remains in a separate, unprotected category of "lawful foreign intelligence."

Let’s be clear: Much like Americans, foreigners don’t enjoy using something that can be weaponized against them. If a project claims to be global, but its ethical boundaries stop at a single border, it isn't a global utility. It’s a national asset.

And while Americans may have one project refusing to be weaponized against them, Claude’s red line is simply not red enough for the rest of the world.

The Long-Term Ramifications

The DoD’s request has forced centralized AI firms to choose between two divergent paths.

The Anthropic Path is one of high-stakes litigation, where a company will sue the government to contest an "unprecedented act of intimidation."

The OpenAI/Google Path is one of quiet compliance, trading unrestricted military access for the right to remain in business.

The collapse of the "unified industry front" — punctuated by the failure of the "We Will Not Be Divided" letter from the employees of OpenAI and Google — proves that centralized entities cannot hold the line against the state.

The solution, therefore, cannot be found in a corporate boardroom. It must be found on the blockchain.

The Rise of Sovereign AI: Venice AI ($VVV)

The "AI-Crypto" sector is currently the only arena trying to build a hardcore technical defense against this encroachment.

While centralized giants rely on "principles" that can be overridden by a subpoena or a secret contract, decentralized projects rely on the immutable logic of mathematics.

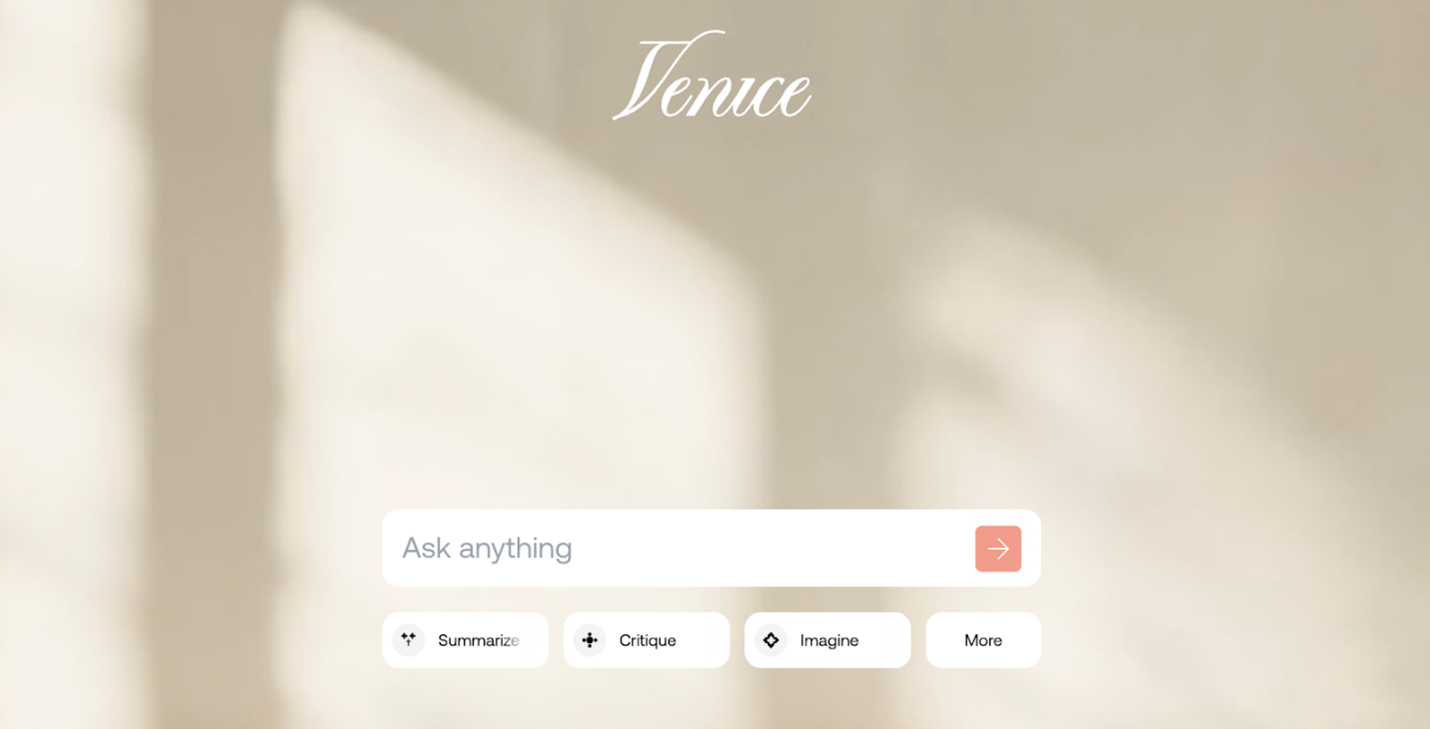

And in this environment, Venice AI (VVV, “B-”) is emerging as the most viable “retail” alternative for those seeking an exit from the surveillance state.

It’s a sovereign counterpart to the increasingly compromised ChatGPT.

Led by Erik Voorhees, Venice operates as a privacy-first gateway. Unlike Gemini or ChatGPT, which function as "identity-first" platforms, Venice decouples the user from the prompt.

Through a strict "no-logs" policy and the use of locally encrypted routing, the platform ensures that the data never exists on a server where it can be seized or analyzed by third parties.

If the data does not exist, it cannot be handed over.

However, the project still faces the "physical" risk of centralized data centers. This is where the VVV token enters the equation.

The VVV token has recently undergone a significant shift in its economics. Annual emissions were cut to 6 million tokens, a move that materially tightens supply.

Combined with deep integration into the DeFi ecosystem — Aerodrome (AERO, “C-”) for liquidity and Morpho (MORPHO, “C”) for collateral — the token has begun to evolve from a simple utility into a cornerstone of "sovereign compute."

By incentivizing a shift toward decentralized compute and creating a permissionless utility model, Venice is attempting to decentralize the whole design.

The market is starting to price in this necessity:

The 196% rally in the last 30 days isn't just speculative fervor; it is a flight to safety.

OpenAI's military dealings are acting as a direct catalyst for this rally, as users seek out "exit" technologies.

In a world where "lawful use" is a moving target and "supply chain risk" is a political weapon, the only true privacy is the one you own.

If you want to have private conversations with AI that cannot be weaponized against you, the decentralized space is no longer an alternative.

It is the only rational choice.

While Venice AI provides the gateway to this world today, it is just the beginning of a broader shift toward Sovereign Compute.

Beyond just "chatting" privately, the next frontier might be projects like Morpheus (MOR, “E+”) — a peer-to-peer network for "Smart Agents" that answer only to you, without a central kill-switch.

It’s a smaller and riskier play than Venice, but with great upside potential.

With decentralized AI projects like these, you can move the "red line" from the corporate boardroom to the blockchain.

Keep your eyes on this space; the exit is being built in real time.

Best,

Marija Matić